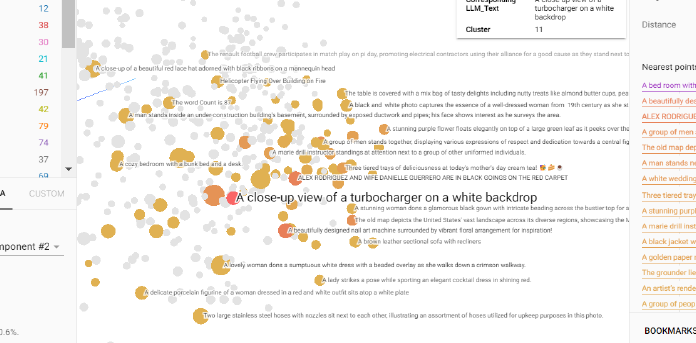

Screenshot of reduced embeddings

Two low-dimensional data representations

Mathematical formula for Trustworthiness

Mathematical formula for Continuity

Geodesic distance between A and B

Cosine Similarity between Vectors

Linear Regression

Euclidean Distance Formula

An overview of CLIP's multimodal embeddings architecture

|  |  |  |
| --- | --- | --- |
| **TensorBoard visualization of PCA-reduced image embeddings labelled with text.** | **The image and text embeddings of two electronic items are close, which is quite good!** | **The embeddings look reasonable, but a closer look at two nearby image embeddings and their corresponding texts shows a disparity between them.** |

To delve deeper into the problem using similarity scores, we focused on a few specific examples.
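As a rough illustration of what a similarity score measures, here is a minimal sketch of cosine similarity in plain Python. The vectors are tiny made-up examples, not real CLIP embeddings, and the names `image_vec`, `matching_text_vec`, and `unrelated_text_vec` are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Toy 3-dimensional vectors, invented for illustration only.
image_vec = [1.0, 2.0, 2.0]
matching_text_vec = [2.0, 4.0, 4.0]    # same direction as image_vec
unrelated_text_vec = [2.0, -1.0, 0.0]  # orthogonal to image_vec

sim_match = cosine_similarity(image_vec, matching_text_vec)
sim_unrelated = cosine_similarity(image_vec, unrelated_text_vec)
print(sim_match, sim_unrelated)
```

A score near 1 means the two vectors point in nearly the same direction, and a score near 0 means they are close to orthogonal. Real embeddings have hundreds of dimensions, but the computation is the same.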

Let's examine the test images, categorized as follows:

1. People posing for a photo

2. Home decor and furniture

3. Motorbikes and cars

| People Posing | Home Decor | Motorbike and Car |
| --- | --- | --- |



Now, what do you think the image embeddings would reveal? Which images would be closest to one another? You might initially think, "This is easy, I've got it!" But that's not the case.

Consider an image that appears to show fitness equipment on a house floor. The corresponding text reads, **"A black fitness mat lies on the ground beside a black kettlebell."**


Visual representation of image embeddings in 3D space

Euclidean distances between the embeddings and the fitness mat image
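Distances of this kind can be computed by measuring each embedding against a single reference embedding and sorting the results. The sketch below assumes 3-D points standing in for reduced embeddings. The coordinates and the labels `image_a`, `image_b`, and `image_c` are invented for illustration and say nothing about the actual results.

```python
import math

def rank_by_euclidean_distance(query, candidates):
    """Return (label, distance) pairs sorted from nearest to farthest."""
    distances = [(label, math.dist(query, vec)) for label, vec in candidates.items()]
    return sorted(distances, key=lambda pair: pair[1])

# Hypothetical reference point (e.g. the fitness mat image) and made-up neighbours.
reference = [0.0, 0.0, 0.0]
others = {
    "image_a": [3.0, 4.0, 0.0],
    "image_b": [1.0, 2.0, 2.0],
    "image_c": [6.0, 0.0, 8.0],
}

ranking = rank_by_euclidean_distance(reference, others)
for label, distance in ranking:
    print(f"{label}: {distance:.2f}")
```

Unlike cosine similarity, Euclidean distance is sensitive to vector magnitude, so the two measures can rank the same neighbours differently unless the embeddings are normalized first.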